I've been living with a problem for over a year now, and honestly, I was starting to think it was just the price of creating images with generative AI.

Anyone who's tried to create consistent visual content with AI knows what I'm talking about.

You describe your character perfectly, hit generate, and get... close. Maybe the glasses are wrong. Maybe the hair's a different color. Maybe your carefully crafted protagonist suddenly looks like a completely different person.

A lot of people call it a crapshoot.

You get "good enough" content and you roll with it because, hey, at least it's fast and cheap.

I'll admit it: most of the time, if an image looks great on the first try, I just go with it. If it's good enough for me scrolling through my own feed, it's probably good enough for someone casually scrolling past.

But some things aren't negotiable.

The Details That Matter

My creative work revolves around an alternate reality version of Portland called Lexicon City, where I'm represented by an alter ego called the Man in Yellow Sunglasses. My dogs and I are part of this crew (The Daring Creatives) exploring the near future, and every visual detail matters for the story.

The Man in Yellow Sunglasses needs chunky yellow frames, not aviators. Sherman, our dispatcher who sends out intel that drives the story forward, always wears red goggles — we never see his eyes — and he's got the world's cutest nub for a tail. Those details aren't just aesthetic choices. They're my brand!

So when Sherman shows up with a long tail and no goggles, or when the Man in Yellow Sunglasses is wearing aviators and has no hat, I know I'm spinning the wheel again (and might be in for a long night).

This was happening constantly...

I'd generate five images and maybe one would have the right details. The rest would be close but wrong in ways that irritated me.

Enter Claude Code

I used Claude Code to build character sheets and brand style guides, then shared detailed information about this creative universe with it, including individual reference images of specific assets: the exact style of sunglasses, the goggles, even clothing styles. I fed it everything that mattered for consistency.
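One way to make a character sheet useful to a reviewer is to store the non-negotiable details as data and render them into a checklist that can be graded item by item. This is a minimal sketch, not the format my sheets actually use; `CharacterSheet` and `checklist()` are hypothetical names, and the item wording is illustrative.

```python
from dataclasses import dataclass, field


@dataclass
class CharacterSheet:
    """One character's non-negotiable visual details (hypothetical format)."""
    name: str
    must_have: list[str] = field(default_factory=list)
    must_not_have: list[str] = field(default_factory=list)

    def checklist(self) -> str:
        """Render the sheet as a numbered checklist a reviewer can grade item by item."""
        items = [f"MUST show: {d}" for d in self.must_have]
        items += [f"MUST NOT show: {d}" for d in self.must_not_have]
        numbered = [f"{i}. {item}" for i, item in enumerate(items, start=1)]
        return "\n".join([f"Character: {self.name}", *numbered])


# Details taken from the story; the exact wording of each item is illustrative.
man_in_yellow = CharacterSheet(
    name="Man in Yellow Sunglasses",
    must_have=["chunky yellow frames", "a hat"],
    must_not_have=["aviator sunglasses"],
)
sherman = CharacterSheet(
    name="Sherman",
    must_have=["red goggles covering his eyes", "a short nub tail"],
    must_not_have=["visible eyes", "a long tail"],
)

print(sherman.checklist())
```

Listing what must *not* appear matters as much as what must: "aviators" and "long tail" are exactly the near-misses that keep slipping through.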

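I'm using Nano Banana through the Gemini API to generate these images, and they usually look good. But here's the thing I learned: you can also retouch images through the API, sending an existing image back with instructions for what to fix instead of generating a new one from scratch.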

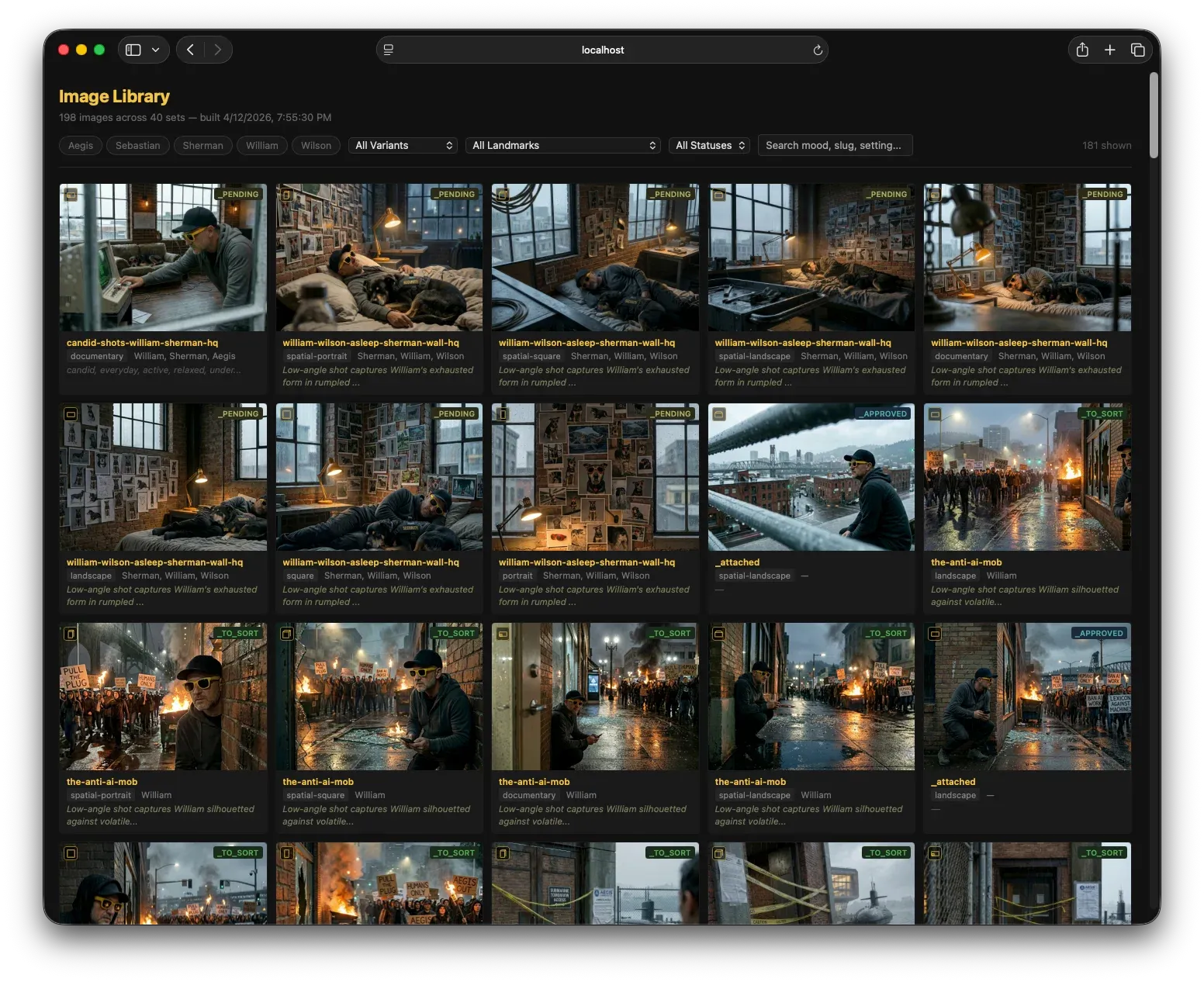
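A retouch works best when the instruction is narrow: name only the details to fix and say explicitly that everything else stays put, so the edit doesn't re-roll the whole image. Here's a minimal sketch of building that instruction text from a reviewer's findings; `build_retouch_instruction` is a hypothetical helper, the phrasing is an assumption, and the actual API call that sends the image plus this text is left out.

```python
def build_retouch_instruction(issues: list[str], keep: tuple[str, ...] = ()) -> str:
    """Turn reviewer findings into a narrow edit request (hypothetical helper)."""
    if not issues:
        raise ValueError("nothing to retouch")
    parts = ["Edit this image. Change ONLY the following:"]
    parts += [f"- {issue}" for issue in issues]
    if keep:
        # Naming what must survive the edit helps keep the rest of the image stable.
        parts.append("Keep everything else exactly as it is, including:")
        parts += [f"- {item}" for item in keep]
    else:
        parts.append("Keep everything else exactly as it is.")
    return "\n".join(parts)


print(build_retouch_instruction(
    issues=["Replace the aviator sunglasses with chunky yellow frames"],
    keep=("pose and composition", "background", "lighting"),
))
```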

So now I have Claude generating candidate images, reviewing them, scrutinizing how closely they match the style guide, and then retouching them until they pass inspection.

It takes longer than just accepting whatever comes out first. But it happens while I'm sleeping, or every 10 minutes on a scheduled cron job.

The System in Action

Claude generates multiple candidates (using Nano Banana), compares them against the character sheets, identifies what's wrong (wrong glasses, missing details, inconsistent styling), and then retouches until they match the brand guide.
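Stripped to its control flow, that loop is: generate once, review, retouch on failure, and stop when the image passes or the retouch budget runs out. This is a sketch under assumptions, not my production code: `generate`, `review`, and `retouch` are hypothetical callables standing in for the Nano Banana and Claude calls, and the default budget of 4 mirrors the retouch count mentioned later in this post.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Review:
    passed: bool
    issues: list[str]


@dataclass
class Result:
    image: bytes
    passed: bool
    retouches_used: int
    reviews: list[Review]


def make_on_model_image(
    prompt: str,
    generate: Callable[[str], bytes],              # stand-in for the image model call
    review: Callable[[bytes], Review],             # stand-in for a separate reviewer model
    retouch: Callable[[bytes, list[str]], bytes],  # edits the existing image
    max_retouches: int = 4,
) -> Result:
    """Generate one candidate, then review and retouch until it passes or the budget runs out."""
    image = generate(prompt)
    reviews: list[Review] = []
    for attempt in range(max_retouches + 1):
        verdict = review(image)
        reviews.append(verdict)
        if verdict.passed:
            return Result(image, True, attempt, reviews)
        if attempt < max_retouches:
            image = retouch(image, verdict.issues)
    return Result(image, False, max_retouches, reviews)


def make_candidates(prompt, generate, review, retouch, n=3, max_retouches=4):
    """Run the loop for several independent candidates."""
    return [make_on_model_image(prompt, generate, review, retouch, max_retouches) for _ in range(n)]


# Stub demo: the first draft has aviators; one retouch fixes it.
result = make_on_model_image(
    "The Man in Yellow Sunglasses in Lexicon City",
    generate=lambda p: b"aviators",
    review=lambda img: Review(img == b"yellow frames", [] if img == b"yellow frames" else ["wrong glasses"]),
    retouch=lambda img, issues: b"yellow frames",
)
print(result.passed, result.retouches_used)  # True 1
```

Keeping every `Review` in the result is what makes it possible to audit the discarded candidates later.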

I can go back and look at all the discarded candidates to make sure it's working properly. But ideally, I just want to see consistent images from the start — and those are the ones that get bumped to the top of the list for me to review.
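Bumping the consistent images to the top while keeping the rejects around for spot checks can be as simple as a sort plus a split. A minimal sketch, assuming each candidate carries a pass/fail flag and a count of open issues from its last review; `Candidate` and `triage` are hypothetical names.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    path: str         # hypothetical: where the image was saved
    passed: bool      # did it clear review against the style guide?
    issue_count: int  # open issues at the last review


def triage(candidates: list[Candidate]) -> tuple[list[Candidate], list[Candidate]]:
    """Split candidates into a review queue and an audit pile, best first in each."""
    ranked = sorted(candidates, key=lambda c: (not c.passed, c.issue_count, c.path))
    queue = [c for c in ranked if c.passed]
    discarded = [c for c in ranked if not c.passed]
    return queue, discarded


queue, discarded = triage([
    Candidate("a.png", passed=False, issue_count=2),
    Candidate("b.png", passed=True, issue_count=0),
    Candidate("c.png", passed=False, issue_count=1),
])
print([c.path for c in queue], [c.path for c in discarded])
# ['b.png'] ['c.png', 'a.png']
```

Sorting the discards by issue count puts the near-misses first, which are the ones most worth checking when you want to know whether the reviewer is being too strict.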

With Anthropic's new Claude Managed Agents launching this week, I'm probably going to rebuild this whole system to be even more robust. The idea of having a persistent agent that's always watching for consistency issues and learning from each iteration? That's exactly what this workflow needs.

Here's a tip if you want to deploy something like this:

Have Claude do the QC on image quality, not Gemini. Initially, I had Gemini handling both image generation and QC, and I ran into issues: I was essentially asking Gemini to grade its own work. When I handed QC off to Claude, my rate of improvement spiked dramatically. I also raised the number of retouch attempts per image from 2 to 4, which helped too.
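Splitting generation from QC means the reviewer's answer has to come back in a form the pipeline can act on. A minimal sketch, assuming you ask the reviewer model to reply with JSON shaped like `{"checks": [{"item": ..., "ok": ..., "note": ...}]}`; that schema and `parse_verdict` are hypothetical. The parser fails closed: anything malformed counts as a failed review, so a confused grader can never wave an image through.

```python
import json


def parse_verdict(reply: str) -> tuple[bool, list[str]]:
    """Return (passed, issues) from a reviewer reply; malformed replies fail closed."""
    # Reviewers often wrap JSON in prose, so take the outermost {...} span.
    start, end = reply.find("{"), reply.rfind("}")
    if start == -1 or end < start:
        return False, ["reviewer reply contained no JSON"]
    try:
        checks = json.loads(reply[start:end + 1])["checks"]
        issues = [
            f"{c['item']}: {c['note']}" if c.get("note") else c["item"]
            for c in checks
            if c["ok"] is not True  # only an explicit true counts as a pass
        ]
    except (ValueError, KeyError, TypeError, AttributeError):
        return False, ["reviewer reply was not in the expected format"]
    if not checks:
        return False, ["reviewer returned no checks"]
    return not issues, issues


reply = (
    'Here is my review:\n{"checks": ['
    '{"item": "chunky yellow frames", "ok": false, "note": "aviators"}, '
    '{"item": "hat", "ok": true}]}'
)
print(parse_verdict(reply))
```

The issues list feeds straight into the next retouch request, so each note should describe the fix, not just the failure.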

Here's Why You Should Care About This

If you're creating any kind of ongoing visual content where consistency matters — whether it's characters for a story, products for marketing, or just a cohesive aesthetic for your brand — this approach works.

The key insight wasn't that AI image generation is broken. It's that AI can also fix AI. You just have to teach it what to look for and give it the tools to iterate until it gets there.

Now instead of spending my time generating and regenerating images, I spend it on the creative decisions that actually matter. The AI handles the consistency checking. I handle the story.