I've been getting questions about how I actually produce content here, so I thought it was time to just show it.

This is a breakdown of the full system — the tools, the structure, how ideas move from a voice note to a published post, and which parts are automated versus which parts still need me in the room. It's technical in places, but I'll try to make sure a beginner can follow the logic even if they've never touched a terminal.

The Three Tools Doing Most of the Work

The backbone is three tools working together: Obsidian, Ghost, and Claude (specifically Claude Code and Claude Cowork).

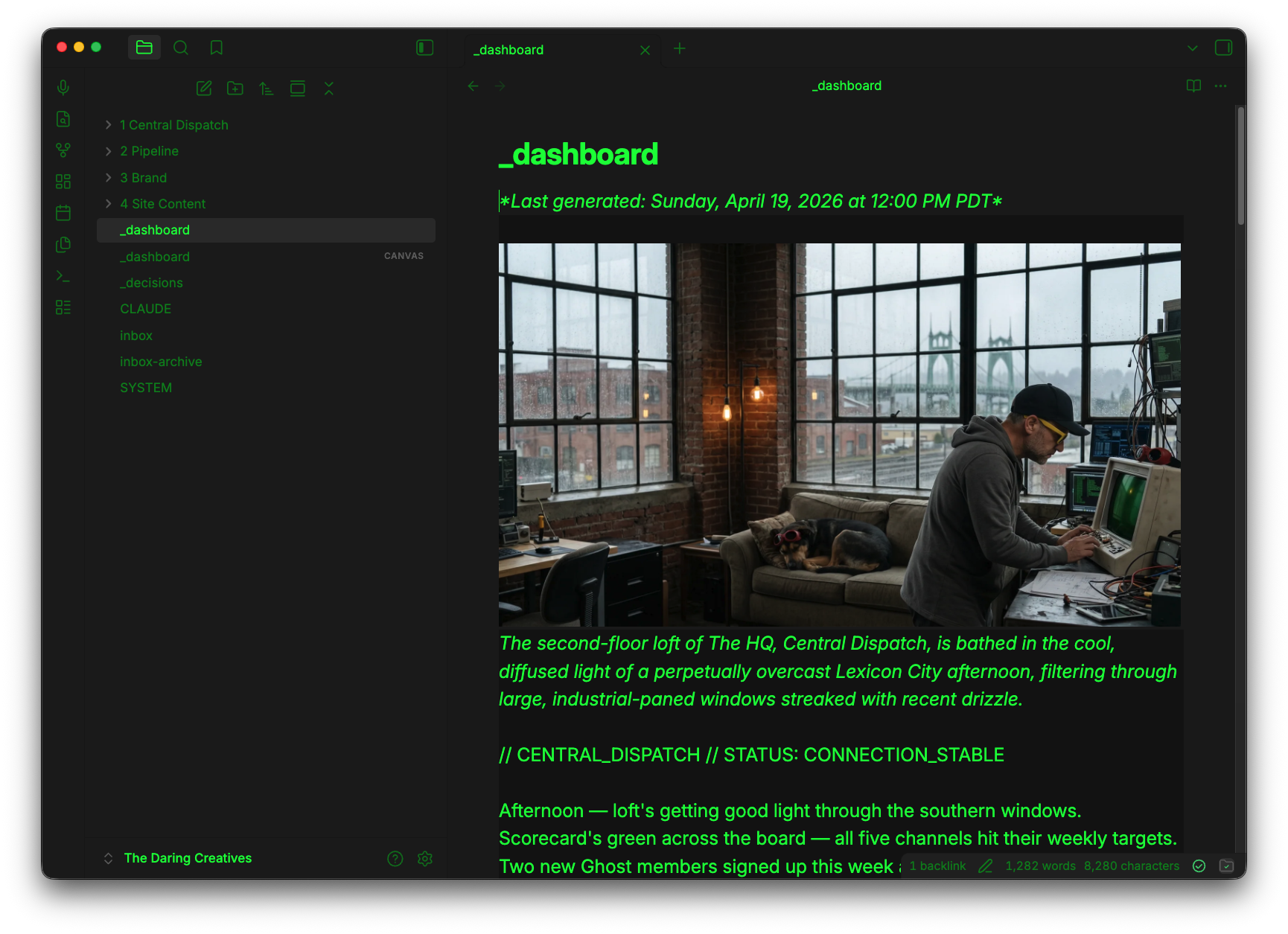

Obsidian is where everything lives. It's a local markdown vault — basically a folder of plain text files that Obsidian gives you a nice interface for. I use it as the source of truth for all content in various stages. Drafts, final articles, brand notes, the content pipeline itself — it all lives in the vault.

Ghost is the CMS. It handles the public-facing site, member subscriptions, and email newsletters. Importantly, it has an Admin API, which means I can push content to it programmatically without touching the browser.

Claude is the AI layer. I use two modes of it: Claude Code runs scheduled automated tasks from the terminal (fetching news, running analytics, processing the content queue), and Claude Cowork is the interactive session where I draft, edit, and strategize in real time.
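To make "push content programmatically" concrete, here is a minimal sketch of how a Node.js script could authenticate against the Ghost Admin API and describe a draft-post request. As I understand Ghost's documentation, an Admin API key has the form `id:secret`, and requests carry a short-lived HS256 token signed with the hex-decoded secret. The function names (`makeGhostToken`, `buildDraftRequest`), the site URL, and the key in the usage note are placeholders, not the actual script used here.

```javascript
const crypto = require("node:crypto");

// Base64url-encode a string (JWT segments use this encoding).
function base64url(input) {
  return Buffer.from(input).toString("base64url");
}

// Build a short-lived HS256 token from an Admin API key of the form "id:secret".
function makeGhostToken(adminApiKey, nowSeconds = Math.floor(Date.now() / 1000)) {
  const [id, secret] = adminApiKey.split(":");
  const header = { alg: "HS256", typ: "JWT", kid: id };
  const payload = { iat: nowSeconds, exp: nowSeconds + 5 * 60, aud: "/admin/" };
  const signingInput =
    `${base64url(JSON.stringify(header))}.${base64url(JSON.stringify(payload))}`;
  const signature = crypto
    .createHmac("sha256", Buffer.from(secret, "hex"))
    .update(signingInput)
    .digest("base64url");
  return `${signingInput}.${signature}`;
}

// Describe the request that would create a draft post (nothing is sent here).
function buildDraftRequest(siteUrl, adminApiKey, post) {
  return {
    url: `${siteUrl}/ghost/api/admin/posts/?source=html`,
    method: "POST",
    headers: {
      Authorization: `Ghost ${makeGhostToken(adminApiKey)}`,
      "Content-Type": "application/json",
    },
    // Assumption: posts are always created as drafts, matching the workflow in this post.
    body: JSON.stringify({ posts: [{ ...post, status: "draft" }] }),
  };
}
```

The returned object can be handed to `fetch(req.url, req)` in a recent Node.js version. Keeping the request builder separate from the network call makes it easy to inspect what would be sent before anything touches the live site.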

How the Vault Is Organized

The vault has a few key zones. The main ones for content:

The Pipeline is where raw inputs go — voice transcripts, quick notes, links I want to turn into something. I drop things here and the system picks them up.

Central Dispatch is where drafts land after the AI (Claude) processes them. This is where I read, edit, and either approve or ask for revisions.

Drafts is an untouched copy of every AI draft at the moment it was created. I never touch this folder. It's the baseline I diff against later to calculate the Transparency Protocol.

Ghost Outbox is the publishing queue. When I approve something, it gets reformatted and dropped here. A scheduled script picks it up and pushes it to Ghost as a draft, then cleans up behind itself. This part may be redundant, but for now it is what it is.

There's also a Raw folder where original voice transcripts are archived after they've been processed. This also allows me to calculate the Transparency Protocol, as it shows my raw contribution to each thing we make.
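The folder roles above boil down to a small file-routing step. Here's a rough sketch of it, assuming the draft text has already been generated. The folder names follow this post, but the exact paths, the `FOLDERS` map, and the `routeDraft` function are illustrative guesses rather than the real script.

```javascript
const fs = require("node:fs");
const path = require("node:path");

// Hypothetical folder layout; the real vault paths may differ.
const FOLDERS = {
  dropZone: "Pipeline/Drop Zone",
  forReview: "Central Dispatch/For Review",
  drafts: "Central Dispatch/Drafts",
  raw: "Raw",
};

// Save one draft twice (editable copy plus untouched baseline),
// then move the raw input out of the drop zone into the archive.
function routeDraft(vaultRoot, inputName, draftText) {
  const dir = (key) => path.join(vaultRoot, FOLDERS[key]);
  for (const key of ["forReview", "drafts", "raw"]) {
    fs.mkdirSync(dir(key), { recursive: true });
  }
  const draftName = `${path.parse(inputName).name}.md`;
  fs.writeFileSync(path.join(dir("forReview"), draftName), draftText);
  fs.writeFileSync(path.join(dir("drafts"), draftName), draftText);
  fs.renameSync(path.join(dir("dropZone"), inputName), path.join(dir("raw"), inputName));
  return draftName;
}
```

Writing the baseline copy at the same moment as the editable copy is what makes the later diff trustworthy: the two files start byte-for-byte identical, so every difference is an edit made during review.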

The Custom Instructions Layer

The vault has a CLAUDE.md file at the root. This is Claude's project context — it tells the AI what the site is, how the content pipeline works, what the voice rules are, where everything lives, and what to do at the start of each session.

There's also a voice-reference.md file inside my Brand folder that gets updated whenever I make significant edits to AI-drafted content. My edits get analyzed and the patterns get documented: things like "he cuts the clever comparison and just says the thing straight" or "he inserts himself into observations." The AI reads this before writing anything. It's a living style guide built from actual evidence, not vibes.

This is the part I think most people skip when they set up an AI writing workflow. They'll use a system prompt or a one-liner about tone. A detailed voice reference built from real edit patterns is genuinely different — and it compounds over time.

How an Idea Becomes a Post

The actual flow looks like this:

- I dump something into a Drop Zone folder inside Pipeline. Could be a voice transcript, a few sentences, a link with my quick reaction. This usually happens while I'm on a walk, but it can also happen when I'm in the car (not driving, of course) or even in the shower (where some of the best thinking happens).

- A scheduled Claude Code task runs (I have it set to run automatically) and checks the Drop Zone. If there's something new, it examines the context I left it, drafts a full article, saves identical copies to both For Review and Drafts within Central Dispatch, then archives the original in Raw.

- I open For Review in Obsidian and read the draft. I edit directly in the file, so I'm effectively just editing a simple note. When I'm happy with it, I change the `Status:` line from `Draft — needs William's review` to `Approved`.

- The scheduled task picks up the approval. It diffs the For Review version against the Drafts baseline to calculate the Transparency Protocol (the human/AI contribution split), strips all the pipeline metadata from the body, injects the TP widget, reformats the file with YAML frontmatter, and drops it in Ghost Outbox.

- The publish script runs, pushes the file to Ghost as a draft via the Admin API, and cleans up the Outbox and For Review folders.

- I do a final review in Ghost, add a featured image, and hit publish.
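The approval step in that flow can be sketched in a few lines. This assumes the only pipeline metadata is a single `Status:` line and that the frontmatter needs just a title and a status. The real files likely carry more fields, and the names `isApproved` and `toOutboxFile` are hypothetical.

```javascript
// Return true when the note's Status: line reads exactly "Approved".
function isApproved(noteText) {
  const match = noteText.match(/^Status:\s*(.+)$/m);
  return match !== null && match[1].trim() === "Approved";
}

// Strip the Status: line from the body and prepend YAML frontmatter.
// Assumption: frontmatter fields here are "title" and "status" only.
function toOutboxFile(noteText, title) {
  const body = noteText
    .split("\n")
    .filter((line) => !/^Status:/.test(line))
    .join("\n")
    .trim();
  // JSON.stringify yields a double-quoted string, which is also valid YAML.
  const frontmatter = ["---", `title: ${JSON.stringify(title)}`, "status: draft", "---"].join("\n");
  return `${frontmatter}\n\n${body}\n`;
}
```

Matching on the exact word `Approved` rather than "anything other than Draft" means a half-edited status line never triggers a publish by accident.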

The whole process from raw idea to Ghost draft can be a few minutes of my time total — the rest is automated.

What Claude Code Does vs What Cowork Does

This is a distinction that matters.

Claude Code handles the automated, scheduled work. It runs on a timer and doesn't need me present. It fetches the AI news feed every morning, pulls Google Analytics and Ghost newsletter data into a weekly report, and runs the content pipeline check. These are all Node.js scripts in a .config/ folder in the vault. I can also trigger them manually from the terminal.

Claude Cowork is for the sessions where I'm actively in it — brainstorming, going deeper on a draft, making decisions about direction, asking questions about the site. It has access to all the same vault files, so it's working with the same context as Code. But it's conversational and collaborative rather than automated.

The way I think about it: Code runs the system. Cowork is the partner I think out loud with.

How the System Tracks Performance

I have a Content Intelligence report that gets generated weekly. It pulls GA4 data (page views, traffic sources, engagement time) alongside Ghost newsletter data (email opens, click rates, member tier breakdowns) and outputs a single markdown file in the vault.

Claude reads this at the start of each session. So when I'm drafting something, it already knows which topics are resonating, which posts are under-promoted, and where my traffic is coming from. That creates a simple feedback loop, so the system improves itself over time.

That part took me a while to build and is still evolving. But the principle is simple: the system should know what's working, not just what exists.
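For a sense of what the weekly report step might look like once the numbers are in hand, here's a small sketch that renders a markdown file from already-fetched metrics. The field names (`pageViews`, `openRate`) and the `renderReport` function are made up for illustration. They are not GA4 or Ghost API names, and the real report covers more than this.

```javascript
// Render a weekly report as markdown from already-fetched metrics.
// Field names are illustrative, not taken from the GA4 or Ghost APIs.
function renderReport(weekOf, posts) {
  const rows = [...posts]
    .sort((a, b) => b.pageViews - a.pageViews) // most-viewed posts first
    .map((p) => `| ${p.title} | ${p.pageViews} | ${(p.openRate * 100).toFixed(1)}% |`);
  return [
    `# Content Intelligence: week of ${weekOf}`,
    "",
    "| Post | Page views | Email open rate |",
    "| --- | --- | --- |",
    ...rows,
    "",
  ].join("\n");
}
```

Plain markdown is a deliberate fit for this setup: the report is just another file in the vault, readable in Obsidian and by Claude without any extra tooling.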

Why I Built It This Way

Honestly, I built it because there's a bunch of content work I find genuinely tedious. Reformatting files for publishing, pulling analytics, chasing down whether I remembered to archive something. Basically a bunch of stuff I would rather not do.

The parts I actually enjoy are thinking through an idea, adding to something that's already 80% there (and good), and building the universe around the site. Those I want to spend more time on, not less. So the automation is pointed at the parts I don't want to do, not the parts that make the work worth doing.

It's also a transparency project.

Every post on this site includes a Transparency Protocol — who wrote what, what the AI contributed, what stack was used. The system calculates that automatically based on a real diff of the drafts. The number is honest because it's computed, not estimated.
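One way to compute a split like that from a diff is to count how many words of the final text survive, in order, from the AI baseline. The sketch below does this with a word-level longest common subsequence. It is a simplified stand-in, not the actual formula: it ignores the raw transcript contribution, and the names `lcsLength` and `contributionSplit` are hypothetical.

```javascript
// Length of the longest common subsequence of two word arrays
// (classic dynamic programming, keeping one row at a time).
function lcsLength(a, b) {
  let prev = new Array(b.length + 1).fill(0);
  for (let i = 1; i <= a.length; i++) {
    const curr = [0];
    for (let j = 1; j <= b.length; j++) {
      curr[j] = a[i - 1] === b[j - 1]
        ? prev[j - 1] + 1
        : Math.max(prev[j], curr[j - 1]);
    }
    prev = curr;
  }
  return prev[b.length];
}

// Split the final text's words into "kept from the AI baseline"
// versus "changed or added by the human editor".
function contributionSplit(baselineText, finalText) {
  const words = (text) => text.split(/\s+/).filter(Boolean);
  const baseline = words(baselineText);
  const final = words(finalText);
  if (final.length === 0) return { aiPercent: 0, humanPercent: 0 };
  const kept = lcsLength(baseline, final);
  const aiPercent = Math.round((kept / final.length) * 100);
  return { aiPercent, humanPercent: 100 - aiPercent };
}
```

For example, `contributionSplit("the quick brown fox", "the slow brown fox jumps")` keeps three of the five final words from the baseline, giving `{ aiPercent: 60, humanPercent: 40 }`.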

I don't think AI should make you a ghost.

I think it should make you more yourself, with more time to actually be yourself. That's the version I'm building toward.